A world without work: how quickly might AI come for our jobs?

And how realistic are the scariest projections?

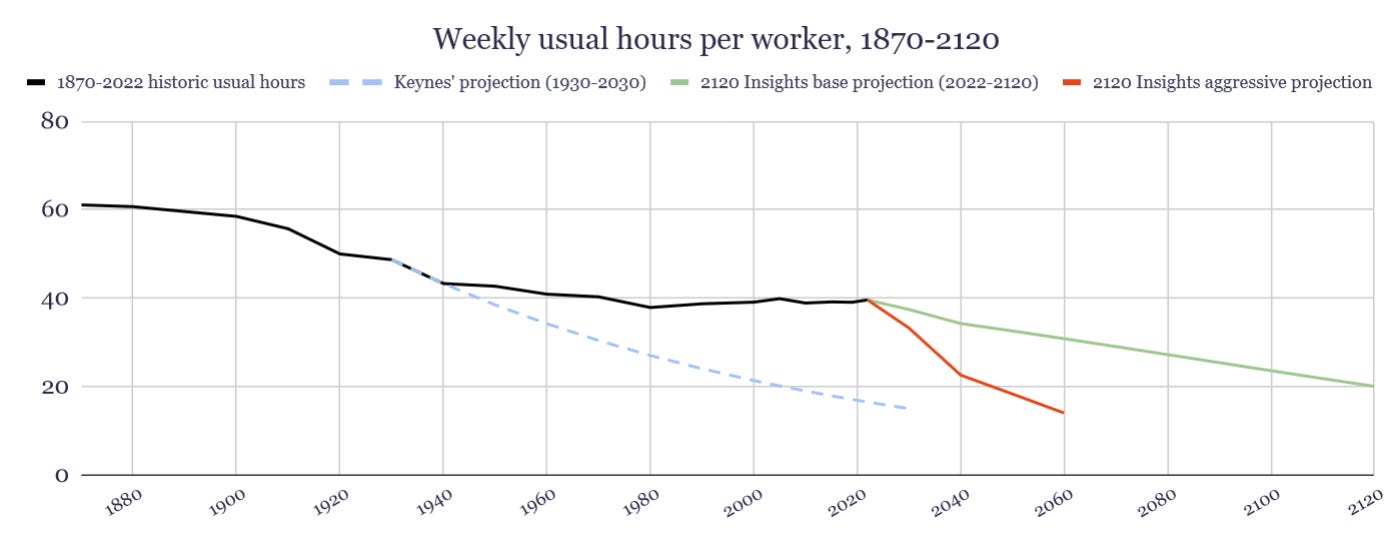

If we couldn’t get to 15-hour workweeks by 2030, what if we finally got there by 2058?

Some of you might have found last week’s base case prediction a little boring. With the possibility of transformational AI right around the corner, why would it take 100 years to get around to only cutting our working hours in half?

Indeed, the base model was a very simple one that estimated 3% labor productivity growth from now until 2040, followed by a return to normal 1.5% growth afterwards. However, community predictions of future productivity growth on Metaculus actually appear to accelerate over time. 25% of forecasters have effectively suggested over 7.3% sustained growth from 2052 through 2122! On the other hand, even these aggressive forecasters only top out at 2.8% growth in the 2020s.

The theory behind these rapid enhancements in productivity is explained a bit in another Metaculus question that asks “If human-level artificial intelligence is developed, will World GDP grow by at least 30.0% in any of the subsequent 15 years?” The question references a theory suggested by Robin Hanson that productivity and GDP growth might dramatically accelerate around the point where machine intelligence begins to overtake that of humans.

Hanson’s 2001 paper, Economic Growth Given Machine Intelligence, has a few key points we should take a critical look at:

By 2025, give or take a few years, the cost of computing power should have continued to fall such that affordable computing hardware would be similar in power to a human brain.

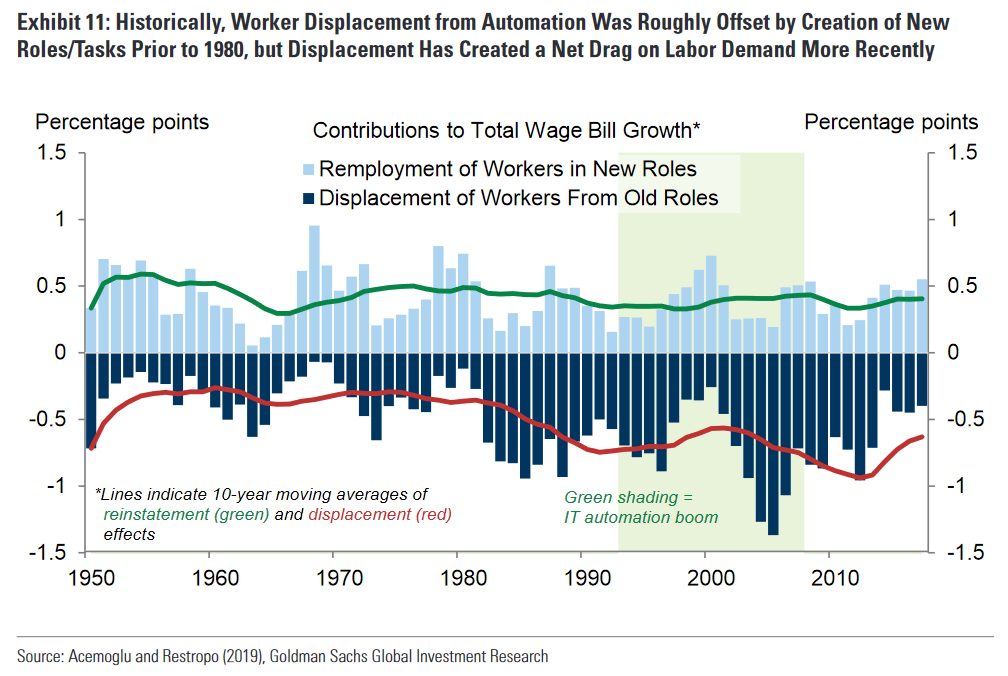

Once machines surpass human abilities, there will be an abrupt shift from machines complementing human labor to machines replacing human labor.

Once this shift occurs, the constraint of a limited pool of human labor will be removed, and the quantity of labor (now provided by machine intelligence) will increase at the rate of rapidly falling computer prices (halving every two years).

This quick scaling of machine intelligence as a labor input could cause a 45% jump in GDP growth within a year.

What does “human-level intelligence” mean?

Point #1 is very à propos for our present moment. Computing power prices have continued to fall at the rates Hanson assumed they would back in 2001. There is, however, still considerable uncertainty about the raw processing power of a human brain.

Current Metaculus forecasts suggest that intellectual parity between machines and humans is 90% likely before 2040. There are a few other forecasts of milestones along the way, such as an LLM with approximately human-level output (between now and early 2026),[1] a “weakly general” AI that can reliably explain its reasoning in answering SAT questions and play a novel video game (between mid-2024 and 2030), and finally the release of a general AI system as proposed by Matthew Barnett that can pass a 2-hour adversarial Turing test and have the mechanical components that would allow it to physically assemble a model (between 2026 and 2045).

The shift from human to machine

If the more aggressive timelines for this turn out to be correct, Hanson’s first hypothesis will not be far off the mark. But what about his second one regarding “an abrupt shift from machines complementing human labor to machines replacing human labor”?

First, it should be acknowledged that every job differs in its complexity and our level of trust that a new technology could do it safely and effectively. Hanson does provide a way to model this complexity to some degree. According to his model, if a job at the 75th percentile of complexity is 3 times as complex as one at the 25th percentile, it would take up to 4 years for a machine intelligence to take those middle 50% of jobs rather than some sort of immediate shift.

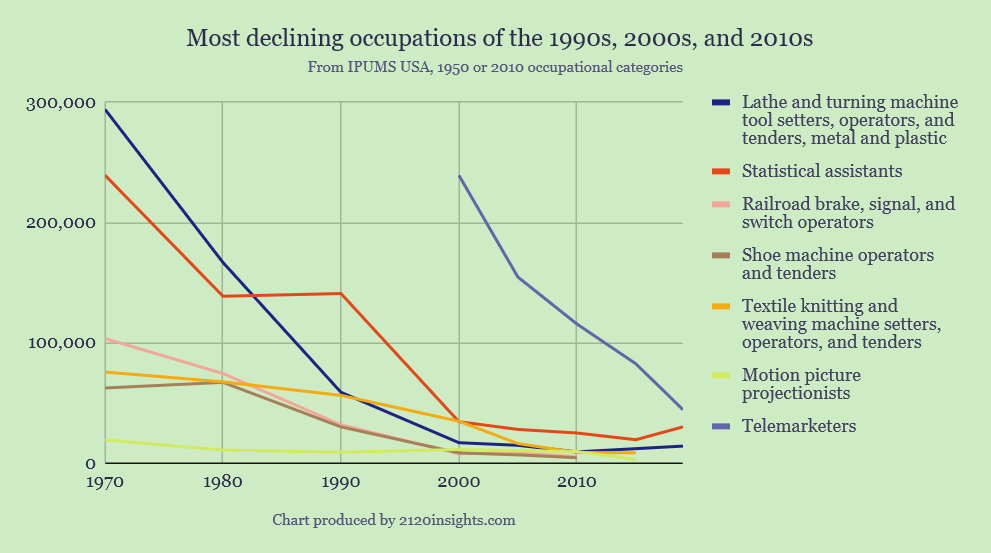
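As a rough back-of-envelope check on these numbers (not Hanson’s actual model), the sketch below makes two simplifying assumptions of my own: machine capability doubles every two years, in line with the price-halving rate mentioned earlier, and the middle 50% of jobs are displaced evenly over the 4-year window. The function names are hypothetical.

```python
import math


def years_to_cover_ratio(complexity_ratio: float, doubling_time_years: float = 2.0) -> float:
    """Years for a capability that doubles every `doubling_time_years`
    to grow by a factor of `complexity_ratio` (assumed exponential growth)."""
    return doubling_time_years * math.log2(complexity_ratio)


def even_yearly_displacement(share_of_jobs: float, years: float) -> float:
    """Share of all jobs displaced per year if `share_of_jobs` is spread evenly over `years`."""
    return share_of_jobs / years


# A 3x complexity gap takes a bit over 3 years under the doubling assumption,
# which fits inside the "up to 4 years" window.
print(f"{years_to_cover_ratio(3.0):.2f} years")

# Middle 50% of jobs spread over 4 years.
print(f"{even_yearly_displacement(0.50, 4):.1%} of jobs per year")  # 12.5%
```

Spreading the displacement evenly is the crudest possible choice; a curve that starts slowly and accelerates would put the peak yearly drop higher than the average.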

A 12.5% drop in employment in a single year is still wild. Declines on that scale have happened in individual occupations before: according to the Census, each decade has typically seen a large, sustained drop of this kind among a handful of occupations, such as telemarketers and motion picture projectionists in the 2010s, textile workers in the 2000s, and statistical assistants in the 1990s.

That said, there has never been a collapse anywhere near this scale economy-wide. Even in a scenario where AI takes off more quickly than expected, I think there are a few factors that would keep the job market as a whole from imploding that quickly:

Excepting the spring of 2020, a general 1%+ rise in unemployment in a single month hasn’t happened since December 1953. If jobs were not shed so quickly even in other recent recessions, including in 2008, it seems unlikely for the adoption of human-replacing AI to proceed at that pace.

The various Turing tests described in the Metaculus markets might not be enough to prove that AI systems could be reliable in all of the edge cases that come up in actual jobs.

For jobs that require physical presence, there would be additional manufacturing costs on top of innovations in AI algorithms and computing power. The production of robots can only scale up so quickly, even if they were in theory capable of doing many sorts of jobs.

Some jobs may always require a human touch for reasons of alignment, regulation, and personal/market preference.

With this in mind we can look at how Hanson’s hypothesized “abrupt shift” affects three primary categories of jobs:

Jobs that can be done remotely (about 30% of the workforce): potentially impacted by transformative AI sooner.

Jobs that require physical presence, but could be automated (about 45% of the workforce): will see more direct impact from AI only after more sophisticated tests in the physical world are passed.

Jobs unlikely to be replaced by machines (25% of the workforce): people will always want to work to some degree, and someone is going to have to keep the machines in line.

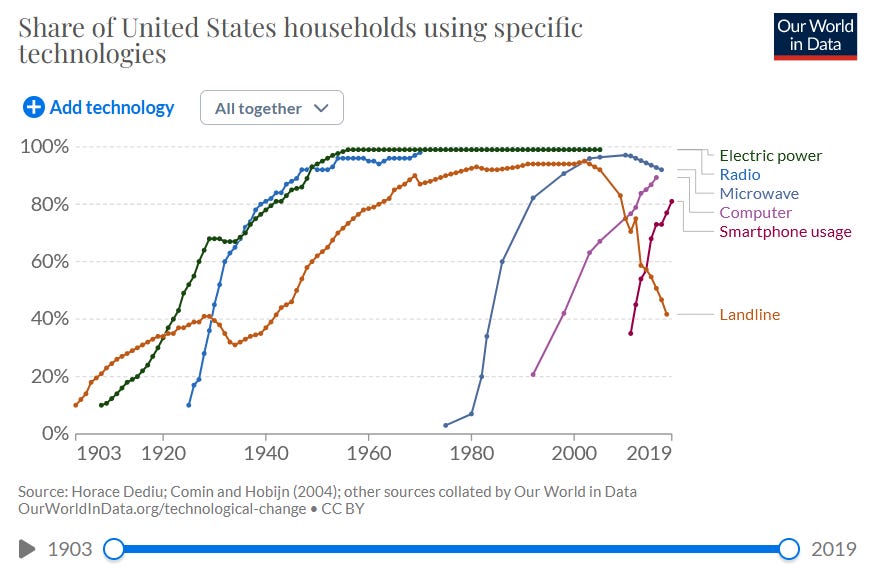

We can also imagine the pace of transformative AI compared to how quickly earlier transformative technologies were broadly adopted:

Peak adoption rate of small, immediately useful technologies is about 8% of the population per year: e.g. smartphones in the 2010s, microwaves in the 1980s, radio circa 1930.

Peak adoption rate of medium-sized tech with some learning curve is about 4% per year: e.g. PCs in the 1990s.

Peak adoption rate of technologies with sizeable infrastructural and implementation costs is about 2-3% per year: e.g. electricity in industry between 1900-1940 or landline telephones between 1940 and 1970.

What curve might adoption of labor-replacing AI tools in virtual workplaces most closely resemble? And how differently might this play out with robots in physical workplaces?

For virtual workplaces, there is a case for an adoption curve even faster than smartphones. End users don’t even need to purchase any physical equipment, and ChatGPT’s user base grew past 100 million faster than any other social network or tool. On the other hand, only 35% of its users find it to be anything more than “somewhat useful”. Like PCs in the 90s, there are issues with reliability, and also a learning curve to be able to make the most productive use of it. It may take time for generative AI tools to prove their reliability and fully integrate themselves with existing workflows. A good middle-of-the-road estimate might be that the labor-replacing functions of AI proceed overall at a rate similar to smartphone adoption, 8% per year.

For physical work, the adoption would likely be slower, as the complexity of introducing robots into the “real” world would probably be somewhere in between the difficulty of electrification and computerization. Let’s say that labor-replacing adoption totals 3% per year once a general AI system of this capability is released.

So, if the more aggressive predictions are true, we could start seeing a significant shift in the automation of virtual work as early as next year (2024). An 8% yearly absolute rate of technological unemployment for 30% of the workforce might only be countered by the historic re-employment rate of 0.5%, so we might expect unemployment to increase (or working hours to drop) an additional 2.25% per year overall.
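The arithmetic behind that 2.25% figure can be sketched in a few lines. The function name is my own, and the inputs are simply the rates quoted above: 8% displacement, 0.5% re-employment, and a 30% share of the workforce.

```python
def net_yearly_job_loss(displacement_rate: float,
                        reemployment_rate: float,
                        workforce_share: float) -> float:
    """Net share of the whole workforce displaced per year within one job category."""
    return (displacement_rate - reemployment_rate) * workforce_share


virtual = net_yearly_job_loss(0.08, 0.005, 0.30)
print(f"{virtual:.2%} of the total workforce per year")  # 2.25%
```

Note that the 0.5% re-employment rate is subtracted inside the category before scaling by the 30% share, which is how the 2.25% figure comes out.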

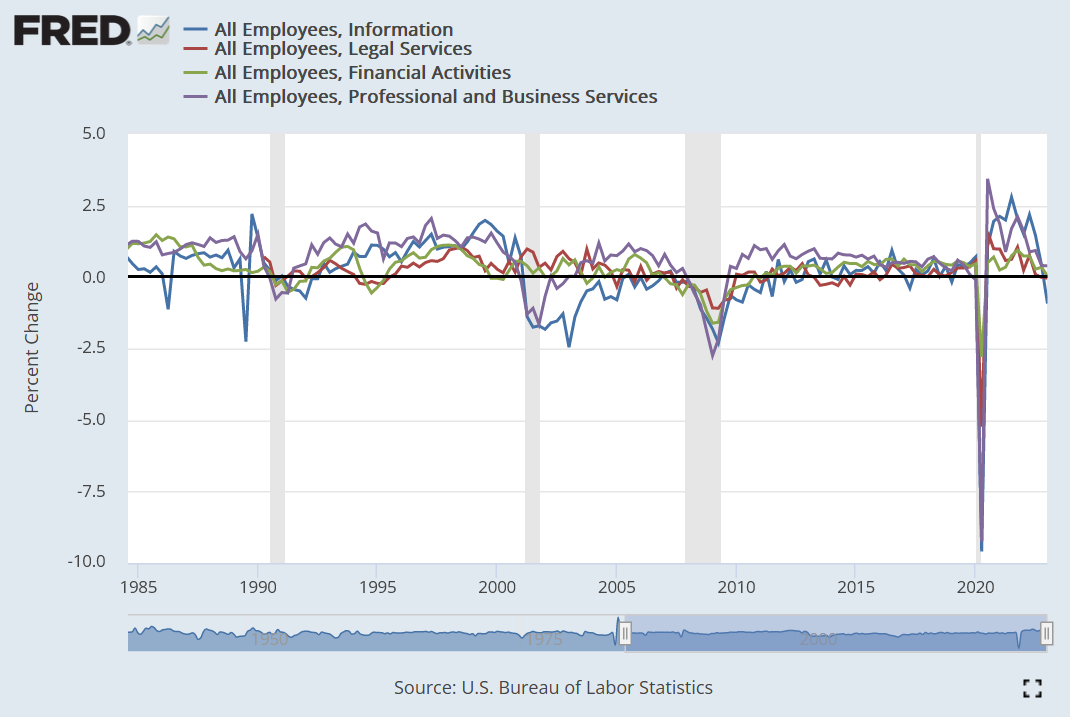

In May 2023, 4,000 people were estimated to have lost their jobs directly because of AI. And more broadly, employment in the information sector might be a canary in the coal mine for this trend. Q1 of 2023 saw a 0.93% drop, or 3.7% on an annualized basis. If this projection is accurate, we’d be looking at a decline about twice as strong as the Q1 2023 tech sector layoffs throughout the broader virtual labor market.
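To make the “about twice as strong” comparison concrete, here is a small sketch that annualizes the quarterly drop two ways (simple multiplication and compounding) and compares it with the projected net decline within virtual work. The helper names are hypothetical; the 7.5% figure is the 8% displacement rate minus the 0.5% re-employment rate.

```python
def annualize_simple(quarterly_rate: float) -> float:
    """Annualize by multiplying the quarterly rate by four."""
    return quarterly_rate * 4


def annualize_compound(quarterly_decline: float) -> float:
    """Annualize a quarterly decline by compounding it over four quarters."""
    return 1 - (1 - quarterly_decline) ** 4


q1_drop = 0.0093  # Q1 2023 drop in information-sector employment
print(f"simple:   {annualize_simple(q1_drop):.1%}")    # 3.7%
print(f"compound: {annualize_compound(q1_drop):.1%}")  # 3.7%

# Projected net yearly decline within virtual work: 8% displaced, 0.5% re-employed.
virtual_net_decline = 0.08 - 0.005
print(f"ratio: {virtual_net_decline / annualize_simple(q1_drop):.1f}x")  # 2.0x
```

At rates this small, the two annualization methods agree to one decimal place, so the choice between them does not change the comparison.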

This would pick up starting in 2026 if a general AI capable of doing physical work is released by then. A 3% decline minus 0.5% re-employment across the other 45% of physical jobs with automation potential would result in a further 1.125% decline in employment each year. Based on these linear[2] trends, by 2030, we’d see employment reduced by a total of 18%, or down to 33.3 hours per week if remaining work hours were distributed equitably amongst the (slightly smaller due to retirements) labor force. Could we make 4-day workweeks a norm by then?
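Here is a minimal sketch of how the 18% figure adds up, assuming the virtual trend starts at the beginning of 2024, the physical trend at the beginning of 2026, and that “by 2030” means the start of that year. Those timing conventions and the constant names are my own reading of the linear model, not something spelled out above.

```python
VIRTUAL_START = 2024   # assumed first year of virtual-work displacement
PHYSICAL_START = 2026  # assumed first year of physical-work displacement

VIRTUAL_NET = (0.08 - 0.005) * 0.30   # 2.25% of the workforce per year
PHYSICAL_NET = (0.03 - 0.005) * 0.45  # 1.125% of the workforce per year


def cumulative_decline(year: int) -> float:
    """Total employment decline at the start of `year` under the linear trends.

    No caps are applied, so this is only meaningful for the early years.
    """
    virtual_years = max(0, year - VIRTUAL_START)
    physical_years = max(0, year - PHYSICAL_START)
    return virtual_years * VIRTUAL_NET + physical_years * PHYSICAL_NET


for year in range(2024, 2031):
    print(year, f"{cumulative_decline(year):.1%}")
# The last line printed is: 2030 18.0%
```

Six years of virtual displacement and four years of physical displacement give 13.5% plus 4.5%. Converting that to hours per week depends on the baseline workweek and on how much the labor force shrinks, which this sketch does not model.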

At this point, nearly half of the work that can be done virtually has been taken over by AI. Assuming the same trend continues, we’d expect most of the remaining virtual work to be automated by 2037, even after taking a modest rate of re-employment into new jobs into account. With the continued decline in physical work as well, we’d either cross 20 hour weeks or 50% unemployment in the mid-2040s. If hours were equitably shared, we’d hit 15 hour weeks only 28 years later than Keynes predicted, in 2058. Better late than never, right?
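The longer-run milestones can be roughly checked by capping each category at its share of the workforce and solving for the year a given total decline is reached. This is a simplified sketch with hypothetical names, using the same assumed start years (2024 and 2026). It ignores changes in the size of the labor force, so it is not expected to reproduce the hours-per-week dates exactly.

```python
VIRTUAL_SHARE, PHYSICAL_SHARE = 0.30, 0.45
VIRTUAL_NET, PHYSICAL_NET = 0.0225, 0.01125  # net yearly loss, share of total workforce


def decline_with_caps(year: float) -> float:
    """Total employment decline at `year`, with each category capped at its workforce share."""
    virtual = min(VIRTUAL_SHARE, max(0.0, year - 2024) * VIRTUAL_NET)
    physical = min(PHYSICAL_SHARE, max(0.0, year - 2026) * PHYSICAL_NET)
    return virtual + physical


def year_reaching(target: float, lo: float = 2024.0, hi: float = 2100.0) -> float:
    """Bisect for the (fractional) year at which total decline first reaches `target`."""
    if decline_with_caps(hi) < target:
        raise ValueError("target is never reached in this model")
    for _ in range(60):
        mid = (lo + hi) / 2
        if decline_with_caps(mid) >= target:
            hi = mid
        else:
            lo = mid
    return hi


virtual_exhausted = 2024 + VIRTUAL_SHARE / VIRTUAL_NET
print(f"virtual work fully automated during {int(virtual_exhausted)}")  # 2037
print(f"50% decline reached around {year_reaching(0.50):.1f}")          # 2043.8
```

Because the 25% of jobs treated as non-automatable never enter the calculation, total decline in this sketch tops out at 75%.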

It’s an open question where we might go from here. Barring any apocalyptic scenarios, perhaps the decline in work would level off as we get to the point where the only jobs that are left are the ones that we might never want to have machines do fully (25% in this model, but could be higher). And past a certain point, reducing the amount of work needed might not provide us much value anymore.

How much will AI continue to scale up after disrupting employment?

Speaking of value, we still need to address Hanson’s 3rd point. He assumes that once we cross the point of machines replacing rather than complementing human labor, machine intelligence could continue scaling up at the rate at which the price of computing power continues to fall, implying a potential 41% rise in these new “labor inputs” each year.
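The 41% figure follows from the price of computing halving every two years: if a fixed budget buys twice as much computing after two years, it buys the square root of two times as much after one. A quick sketch, with a function name of my own:

```python
def yearly_growth_from_halving(halving_time_years: float = 2.0) -> float:
    """Yearly growth in computing per dollar if its price halves every `halving_time_years`."""
    return 2 ** (1 / halving_time_years) - 1


print(f"{yearly_growth_from_halving():.1%}")  # 41.4%
```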

This might be true in some areas. As I run spreadsheets full of arrayformulas with many complex equations, I am benefiting from the computing power that used to take teams of statistical assistants and “human calculators”. Gaming in virtual reality could get increasingly vivid over time, and AI is already set to progress from generating images and short videos to full-length movies. But how much longer can the additional computing power provide us with more value, and at what point do diminishing returns limit further growth?

The limits to GDP growth

Hanson’s 4th point also makes a bold claim about GDP growth. But how the improvements I mentioned above would get reflected in GDP is debatable. While Google Sheets might give me the value of a team of 1960s-era statistical assistants, what it produces doesn’t have anywhere near the same weight in GDP as the contributions of the people who used to do that work. Quality improvements, like those we might see in art, games, and videos, would also be difficult for GDP to capture objectively.

For these reasons, I am hesitant to share an estimate for GDP growth along with this aggressive forecast of technological unemployment. The categories I used to group occupations are crude enough as it is. Measuring growth across each sector of the economy, and how AI might help satisfy other unmet needs, would require a much more detailed approach, which deserves its own post later.

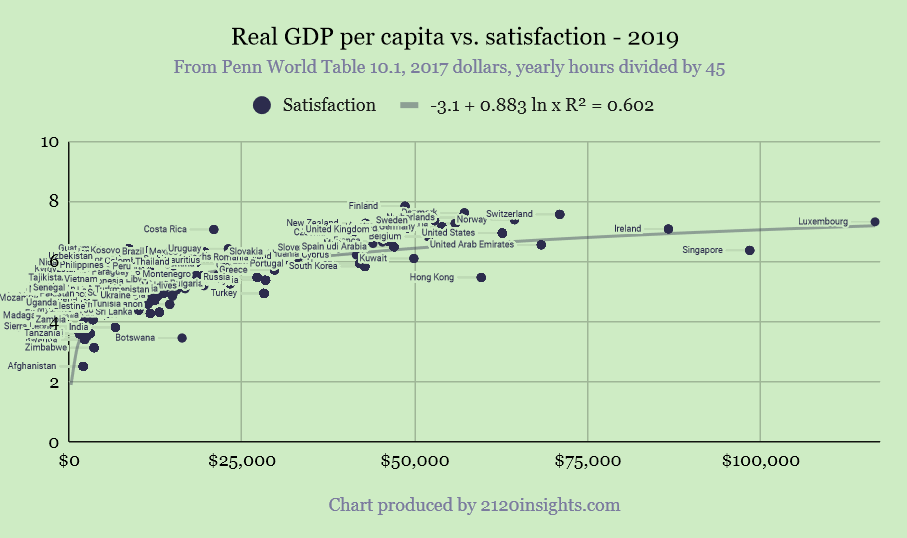

I will put forward one quick hypothesis about future GDP growth for now though. There is a well-established logarithmic relationship between GDP per capita and life satisfaction (as measured by the World Happiness Report): as GDP increases, so does satisfaction, but there are significant diminishing returns.

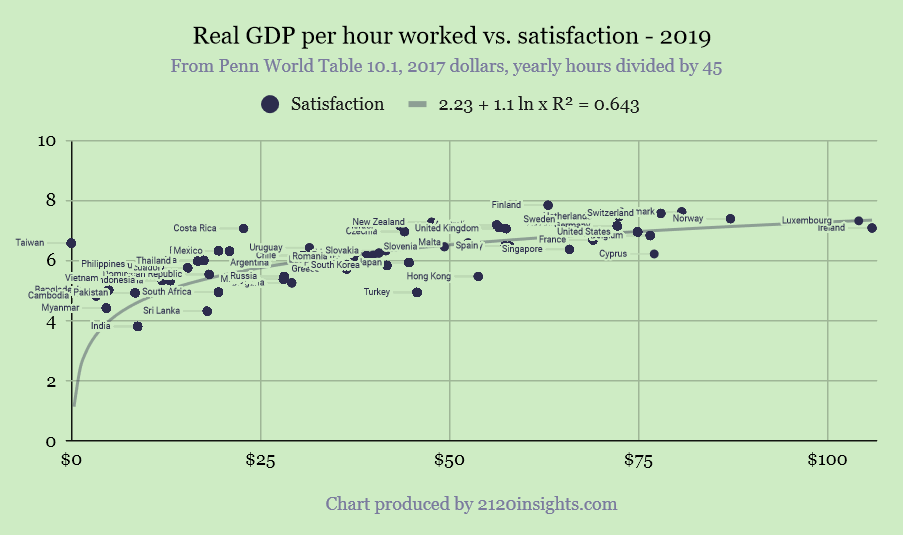

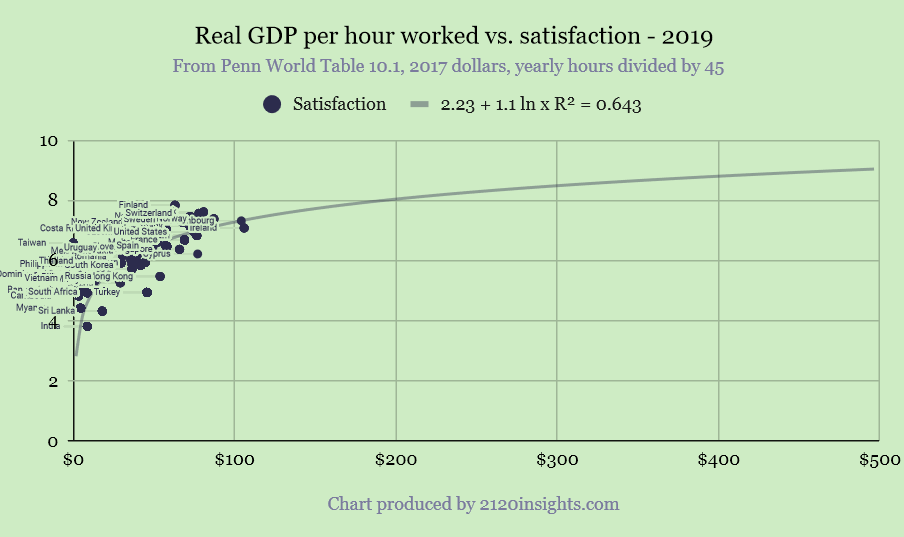

However, the relationship between GDP per hour worked and satisfaction is much closer to linear and a tighter fit.

It is still logarithmic of course, bounded by the maximum satisfaction being 10 on a 10 point scale (and realistically somewhat lower than that). But following that trend, we’d expect to hit a collective 9 at $470/hour, which seems much more achievable than the nearly $900k we’d need to achieve a similar level of satisfaction at the same number of working hours.

$470/hour is somewhat less than the 75th percentile (high) forecast for 2052, so perhaps we might arrive at both an ideal workweek and ideal level of income sometime in the 2050s?

Note: GDP per hour is invariably higher than a median hourly wage. Inequality and income from capital are likely to continue to exist even in a protopia, but a GDP per hour of $470 might imply a median hourly wage of $235/hour[3] for the remaining work that is done. If you made $235 per hour, how many hours would you ideally work?

Try asking this one at your next party, and maybe you’ll get an idea of the length of the workweek we might ultimately settle on in the far future :)

In conclusion

I have of course made tons of optimistic assumptions here that I only have 25% confidence in at most. While my predictions might still seem too conservative for some futurists, they aren’t too far off Marius Hobbhahn’s. The rates of technological unemployment I’ve proposed here are higher than most economists would predict. But even if something like this scenario does come to pass, we still have to contend with:

The potential for inequality to skyrocket if the bargaining power of labor collapses due to fast technological unemployment.

Misaligned AI, or humans misusing AI, resulting in significant catastrophe(s). Surviving such a scenario may entail employing people in much greater numbers to keep a closer eye on potential problems.

Other “exogenous” problems that might hold back our progress such as impacts from climate change and war.

Dealing with these challenges is of course more contingent on politics than it is on technology. As bleak as questions of politics can often be, as I wrote this, I did feel optimistic that we might arrive at some imperfect solutions as the problems become clearer with time. If the headline rate of unemployment began to rise by 0.2% per month, or 0.6% per quarter, it would be immediately visible, especially if concentrated in particular industries. It wouldn’t take long for such a rise to become the top news story, and immediate economic issues are ones that politicians appear to be most sensitive to, especially if they appear to be caused by a technology that Americans already feel skeptical about. The initial solutions won’t be perfect, but I do think the pace of the problem in this scenario could be “just right” to keep the pressure on: fast enough to be taken seriously, but not so fast as to be an immediate catastrophe.

Other threats posed by AI might also arrive at the same pace as technological unemployment, and hopefully could be addressed with similar thoughtful immediacy. While I’d be out of my depth to propose solutions here, I do intend to do a few hopefully helpful things over the coming months:

First, I also want to explore the techno-pessimist case. What if transformative AI doesn’t materialize, or comes much later than we expect? What are the odds of this happening, and what does our future look like in this scenario?

Seeing monthly changes in unemployment by industry is a helpful “canary in the coal mine”, but I’d like to go deeper. How might changes in employment in specific occupations in the monthly Current Population Survey help us identify these trends sooner and with greater precision?

Going beyond the various Turing tests that I linked to earlier, I think Metaculus needs more questions that refer to percentage declines in employment in specific occupations considered most susceptible to automation.

What do you think? Please share any thoughts you might have in the comments!

[1] The details of how “human-level output” would be measured are described here. A common observation of ChatGPT and other LLMs is that their writing style is a little more predictable and is at a lower level of “perplexity” than a human author.

[2] I’ve assumed linear, absolute trends here. It’s likely that a logarithmic model such as the one used by Hanson is more precise, but these linear trends should approach the logarithmic-modeled ones for the crucial middle part of adoption curves, and are also easier for a general audience to understand.

[3] If work is substantially automated, labor’s unadjusted share of income is likely to be much lower, meaning that median real wages might only be a third of this or so at best ($80/hour). But perhaps a world in the 2050s has some sort of expanded earned income tax credit that redistributes more capital income to workers, in addition to a Universal Basic Income.